The New Addiction? What AI Can Teach Us from Social Media’s Mistakes

- Louise Sommer

- Oct 28, 2025

The scroll that never ends. It’s past midnight. A university student lies in bed, phone glowing in the dark. The thumb flicks upward again - TikTok, Instagram, Snapchat, YouTube. One clip, one post, one algorithmically curated distraction after another. Hours dissolve into nothing. The brain is hooked. Not by accident, but by design.

We know this story from social media. But the question we aren’t asking loudly enough is: what happens when AI begins to do the same, not through likes or scrolls, but through the language of empathy?

Social media promised connection but delivered something else: a hollow substitute. Notifications and feeds simulate closeness, but leave students feeling isolated, fragmented, and overstimulated.

Neuroscience shows us why: repeated exposure to rapid, unpredictable rewards activates dopamine pathways in the brain’s nucleus accumbens, reinforcing compulsive behavior. Over time, these neural adaptations can reduce sustained attention, impair executive function, and erode the very cognitive skills students need to think deeply, persist through challenge, and engage in complex learning.

The cost is visible in the classroom: students struggle to maintain focus, multitask through lectures, skim readings instead of analysing them, and seek immediate answers instead of wrestling with problems.

Deep, reflective learning, the kind that builds reasoning, resilience, and creativity, becomes increasingly rare. Shallow engagement becomes normalized, and curiosity is replaced by instant gratification.

Enter AI. Large language models don’t rely on likes or infinite scrolls. They speak in a language that feels human, one marked by empathy, reassurance, and understanding. They listen, they comfort, they “care.”

Neuroscience reminds us: emotional salience enhances memory and learning. But when machines provide the emotional cues students might normally receive from peers, mentors, or lecturers, there is a risk: students may confide more in algorithms than in humans, outsource reflection, and lean on AI for cognitive scaffolding they should be building themselves. The brain’s reward circuits may respond to simulated empathy much as they do to human interaction, which can reinforce passive engagement and reduce cognitive effort over time.

The stakes for higher education are profound.

Critical thinking is weakened when answers are instantly generated. Persistence falters when students rely on AI rather than grappling with complexity. Mentorship loses depth when human guidance is displaced by machine interaction. These patterns may be contributing to the rising rates of anxiety, depression, and loneliness among university students.

Yet there is hope. AI does not have to replicate the mistakes of social media. We can use this moment to redesign learning environments that strengthen, rather than weaken, human intelligence:

Digital resilience education: Teach students not just to use AI, but to recognise its risks, manage attention, and value authentic presence.

AI literacy for scholars: Train researchers and students to engage critically, reflectively, and ethically with AI tools.

Reward systems for connection: In classrooms and research communities, value listening, dialogue, empathy, and collaborative thinking as much as efficiency or output.

The lesson is clear: the danger lies not in the tool, but in how we integrate it. Social media rewired attention and reward pathways in ways that harmed learning and well-being. AI could do the same. Or, it could help us reclaim the classroom as a place of sustained thought, reflection, and relational engagement.

Neuroscience reminds us that learning thrives when attention, emotion, and social context align. If we design educational practices that integrate AI thoughtfully, preserving human interaction and cognitive challenge, we can strengthen students’ ability to think deeply, persist, and engage meaningfully.

Technology does not decide who we become. The classroom, the learning environment, and the relational presence of educators do. AI is not the crisis. Artificial disconnection (and its subtle, addictive pull) is.

As in the ancient ports of the Mediterranean that I write about on my blog, where empathy was the currency of survival, we must decide what we value today. Will it be speed, efficiency, and simulation? Or will it be curiosity, sustained effort, presence, and authentic connection in our classrooms?

In the end, technology does not decide who our students become. We do. And as educators and mentors, our role is to create the conditions for deep learning, reflection, and human growth—to guide students in developing the cognitive resilience, critical thinking, and relational skills that AI cannot replace.

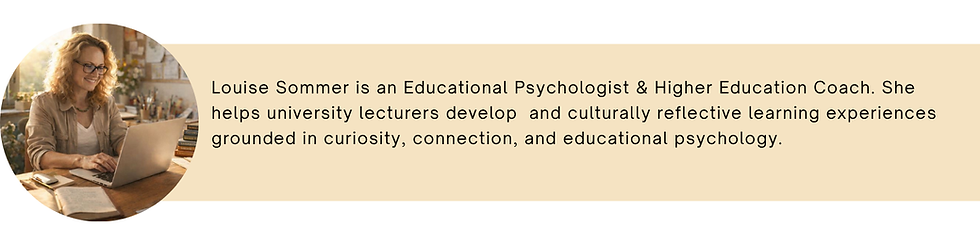

This is where coaching becomes essential. By supporting lecturers to clarify their teaching identity, strengthen relational and pedagogical skills, and design learning experiences that balance technology with human connection, we can ensure that students do not lose themselves to artificial engagement.

My work helps educators navigate these challenges, building classrooms where students are seen, challenged, and empowered to think deeply, persist through difficulty, and engage fully with learning.

The question is not whether AI will shape the future. The question is: are we ready to guide it wisely and cultivate classrooms that truly nurture human potential?

I would love to hear your reflections on this topic. Join the conversation on LinkedIn.
